ga.abs_value(int g_a)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

Collective on the processor group inferred from the arguments.

Take the element-wise absolute value of the array.

ga.abs_value(int g_a, lo=None, hi=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| 1D array-like | lo | lower bound patch coordinates, inclusive | input |

| 1D array-like | hi | higher bound patch coordinates, exclusive | input |

Collective on the processor group inferred from the arguments.

Take the element-wise absolute value of the patch.

ga.acc(int g_a, buffer, lo=None, hi=None, alpha=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| array-like | buffer | the data to put | input |

| 1D array-like of ints | lo | lower bound patch coordinates, inclusive | input |

| 1D array-like of ints | hi | higher bound patch coordinates, exclusive | input |

| object | alpha | scale factor, cast to the appropriate type | input |

One-sided (non-collective).

Combines data from the local array buffer with data in the global array section. The local array is assumed to have the same number of dimensions as the global array.

global array section (lo[], hi[]) += alpha * buffer
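The update rule above can be illustrated without a running GA installation. The following is a minimal pure-Python sketch of the arithmetic only, assuming a 2-dimensional array stored as nested lists; `acc_patch` is a hypothetical helper, not part of the ga module, and it does not model the one-sided communication.

```python
def acc_patch(section, buffer, lo, hi, alpha=1):
    """Emulate `section[lo:hi] += alpha * buffer` on a 2-D nested list.

    `lo` is inclusive and `hi` is exclusive, matching the parameter table.
    """
    rows, cols = hi[0] - lo[0], hi[1] - lo[1]
    # The buffer must have the same shape as the patch it updates.
    if len(buffer) != rows or any(len(r) != cols for r in buffer):
        raise ValueError("buffer shape does not match the patch")
    for i in range(rows):
        for j in range(cols):
            section[lo[0] + i][lo[1] + j] += alpha * buffer[i][j]
    return section


# A 3x4 stand-in for the global array, initially all ones.
g = [[1.0] * 4 for _ in range(3)]
acc_patch(g, [[1.0, 2.0], [3.0, 4.0]], lo=(1, 1), hi=(3, 3), alpha=0.5)
```

Only the elements inside the patch change; everything outside `lo`/`hi` keeps its previous value.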

ret = ga.access(int g_a, lo=None, hi=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| 1D array-like of ints | lo | lower bound patch coordinates, inclusive | input |

| 1D array-like of ints | hi | higher bound patch coordinates, exclusive | input |

Local operation.

Provides access to the specified patch of a global array. Returns an array of leading dimensions ld and a pointer to the first element in the patch. This routine allows direct, in-place access to elements in the local section of a global array. It is useful for writing new GA operations. A call to access normally follows a previous call to distribution that returns the coordinates of the patch associated with a processor. You need to make sure that the coordinates of the patch are valid (test the values returned from distribution).

Each call to access has to be followed by a call to either release or release_update. Only local data can be accessed in this fashion. Since the data is shared with other processes, you need to consider issues of mutual exclusion.

Note: The entire local data is always accessed, but if a smaller patch is requested, an appropriately sliced ndarray is returned.

ret = ga.access_block(int g_a, int idx)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| int | idx | block index | input |

| ndarray | ret | array representing the block at index idx | output |

Local operation.

This function can be used to gain direct access to the data represented by a single block in a global array with a block-cyclic data distribution. The index idx is the index of the block in the array assuming that blocks are numbered sequentially in a column-major order. A quick way of determining whether a block with index idx is held locally on a processor is to calculate whether idx % nproc equals the processor ID, where nproc is the total number of processors. Once the array has been returned, local data can be accessed as described in the documentation for access. Each call to access_block should be followed by a call to either release_block or release_update_block.
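The ownership test described above is simple modular arithmetic. A minimal sketch, assuming the total number of blocks is known; `local_block_indices` is a hypothetical helper and not part of the ga module:

```python
def local_block_indices(proc, nproc, nblocks):
    """Return the block indices idx in [0, nblocks) with idx % nproc == proc."""
    return [idx for idx in range(nblocks) if idx % nproc == proc]


# With 4 processors and 10 blocks, processor 1 holds every fourth block.
blocks_on_p1 = local_block_indices(proc=1, nproc=4, nblocks=10)
```

Each index in the resulting list is one that the processor could pass to access_block as idx.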

ret = ga.access_block_grid(int g_a, subscript)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| 1D array-like of ints | subscript | subscript of block in array | input |

| void** | ret | pointer to locally held block | output |

Local operation.

This function can be used to gain direct access to the data represented by a single block in a global array with a SCALAPACK block-cyclic data distribution that is based on an underlying processor grid. The subscript array contains the subscript of the block in the array of blocks. This subscript is based on the location of the block in a grid, each of whose dimensions is equal to the number of blocks that fit along that dimension. Once the index has been returned, local data can be accessed as described in the documentation for access. Each call to access_block_grid should be followed by a call to either release_block_grid or release_update_block_grid.
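To make the block grid concrete, the sketch below computes how many blocks lie along each dimension and checks whether a subscript falls inside that grid. It assumes that a partial block at the edge of the array counts as a block; `block_grid_shape` and `valid_subscript` are hypothetical helpers, not ga functions.

```python
def block_grid_shape(dims, block_dims):
    """Number of blocks along each dimension (ceiling division)."""
    return [-(-d // b) for d, b in zip(dims, block_dims)]


def valid_subscript(subscript, grid):
    """True if the block subscript lies inside the block grid."""
    return all(0 <= s < g for s, g in zip(subscript, grid))


# A 10x7 array cut into 4x3 blocks (assumed sizes for illustration).
grid = block_grid_shape(dims=(10, 7), block_dims=(4, 3))
inside = valid_subscript((2, 2), grid)
outside = valid_subscript((3, 0), grid)
```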

ret = ga.access_block_segment(int g_a, int proc)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| int | proc | processor ID | input |

| ndarray | ret | locally held data | output |

Local operation.

This function can be used to gain access to all the locally held data on a particular processor that is associated with a block-cyclic distributed array. Once the index has been returned, local data can be accessed as described in the documentation for access. The parameter len is the number of data elements that are held locally. The data inside this segment has a lot of additional structure, so this function is not generally useful to developers. It is primarily used inside the GA library to implement other GA routines. Each call to access_block_segment should be followed by a call to either release_block_segment or release_update_block_segment.

ret = ga.access_ghost_element(int g_a, subscript)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| 1D array-like of ints | subscript | index of desired element | input |

| ndarray | ret | appropriately shaped, possibly non-contiguous ndarray corresponding to global array patch held locally on the processor | output |

Local operation.

This function can be used to return a pointer to any data element in the locally held portion of the global array and can be used to directly access ghost cell data. The array subscript refers to the local index of the element relative to the origin of the local patch (which is assumed to be indexed by (0,0,...)).

ret = ga.access_ghosts(int g_a)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| ndarray | ret | appropriately shaped, possibly non-contiguous ndarray corresponding to global array patch held locally on the processor | output |

Local operation.

Provides access to the local patch of the global array. Returns the leading dimension ld and a pointer to the data. This routine will provide access to the ghost cell data residing on each processor. Calls to access_ghosts should normally follow a call to distribution that returns the coordinates of the visible data patch associated with a processor. You need to make sure that the coordinates of the patch are valid (test the values returned from distribution).

You can only access local data.

ga.add(int g_a, int g_b, int g_c, alpha=None, beta=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| int | g_b | the array handle | input |

| int | g_c | the array handle | input |

| object | alpha | multiplier (converted to appropriate type) | input |

| object | beta | multiplier (converted to appropriate type) | input |

Collective on the processor group inferred from the arguments.

The arrays (which must be the same shape and identically aligned) are added together element-wise.

c = alpha * a + beta * b

The result (c) may replace one of the input arrays (a/b).
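The formula and the in-place property can be checked with a small stand-alone sketch. It uses flat Python lists in place of global arrays, and `add_arrays` with its `out` parameter is a hypothetical helper chosen for illustration, not the ga.add signature.

```python
def add_arrays(a, b, alpha=1, beta=1, out=None):
    """Return c with c[i] = alpha * a[i] + beta * b[i] for flat lists."""
    if len(a) != len(b):
        raise ValueError("arrays must have the same shape")
    if out is None:
        out = [0] * len(a)
    for i in range(len(a)):
        # Each output element depends only on the inputs at the same index,
        # so `out` may safely be `a` or `b` itself.
        out[i] = alpha * a[i] + beta * b[i]
    return out


a = [1.0, 2.0, 3.0]
b = [10.0, 20.0, 30.0]
add_arrays(a, b, alpha=2.0, beta=0.5, out=a)  # the result overwrites a
```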

ga.add_constant(int g_a, alpha)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| object | alpha | constant to add (converted to appropriate type) | input |

Collective on the processor group inferred from the arguments.

Adds the constant alpha to each element of the array.

ga.add_constant(int g_a, alpha, lo=None, hi=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| object | alpha | constant to add (converted to appropriate type) | input |

| 1D array-like of ints | lo | lower bound patch coordinates, inclusive | input |

| 1D array-like of ints | hi | higher bound patch coordinates, exclusive | input |

Collective on the processor group inferred from the arguments.

Adds the constant alpha to each element of the patch.

ga.add_diagonal(int g_a, int g_v)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| int | g_v | array handle | input |

Collective on the processor group inferred from the arguments.

Adds the elements of the vector g_v to the diagonal of the matrix g_a.

ga.add(int g_a, int g_b, int g_c, alpha=None, beta=None,

alo=None, ahi=None, blo=None, bhi=None, clo=None, chi=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| int | g_b | the array handle | input |

| int | g_c | the array handle | input |

| object | alpha | multiplier (converted to appropriate type) | input |

| object | beta | multiplier (converted to appropriate type) | input |

| 1D array-like of ints | alo | lower bound patch coordinates of g_a, inclusive | input |

| 1D array-like of ints | ahi | higher bound patch coordinates of g_a, exclusive | input |

| 1D array-like of ints | blo | lower bound patch coordinates of g_b, inclusive | input |

| 1D array-like of ints | bhi | higher bound patch coordinates of g_b, exclusive | input |

| 1D array-like of ints | clo | lower bound patch coordinates of g_c, inclusive | input |

| 1D array-like of ints | chi | higher bound patch coordinates of g_c, exclusive | input |

Collective on the processor group inferred from the arguments.

Patches of arrays (which must have the same number of elements) are added together element-wise.

c[ ][ ] = alpha * a[ ][ ] + beta * b[ ][ ]

ga.alloc_gatscat_buf(int nelems)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | nelems | maximum number of elements to scatter/gather | input |

Local operation.

This function can be used to enhance the performance when the gather/scatter operations are being called multiple times in succession. If the maximum number of elements being called in any gather/scatter operation is known prior to executing a code segment, then some internal buffers used in the gather/scatter operations can be allocated beforehand instead of at every individual call. This can result in substantial performance boosts in some cases. When the buffers are no longer needed they can be removed using the corresponding free call.

ret = ga.allocate(int g_a)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| bool | ret | True if allocation of g_a was successful | output |

Collective on the processor group inferred from the arguments.

This function allocates the memory for the global array handle originally obtained using the GA_Create_handle function. At a minimum, the GA_Set_data function must be called before the memory is allocated. Other GA_Set_xxx functions can also be called before invoking this function.

Returns True if allocation of g_a was successful.

ret = ga.brdcst(buffer, int root=0)

| Type | Name | Description | Intent |

|---|---|---|---|

| 1D array-like of objects | buffer | the ndarray message (converted to the appropriate type) | input |

| int | root | the process which is sending | input |

Collective on the world processor group.

Broadcasts the message in buffer from process root to all other processes.

If the buffer is not contiguous, an error is raised. This operation is provided only for convenience purposes: it is available regardless of the message-passing library that GA is running with.

Returns: The buffer in case a temporary was passed in.

ga.check_handle(int g_a, char *message)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| char* | message | message string | input |

Local operation.

Checks that the global array handle g_a is valid; if not, calls ga_error with the string provided and some additional information.

ret = ga.cluster_nnodes()

Local operation.

This function returns the total number of nodes that the program is running on. On SMP architectures, this will be less than or equal to the total number of processors.

ret = ga.cluster_nodeid()

Local operation.

This function returns the node ID of the process. On SMP architectures with more than one processor per node, several processes may return the same node id.

ret = ga.cluster_nprocs(int inode)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | inode | node id | input |

Local operation.

This function returns the number of processors available on node inode.

ret = ga.cluster_nodeid(int proc=-1)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | proc | process id | input |

Local operation.

This function returns the node ID of the specified process proc. On SMP architectures with more than one processor per node, several processes may return the same node id.

ret = ga.cluster_procid(int inode, int iproc)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | inode | node id | input |

| int | iproc | processor id | input |

Local operation.

This function returns the processor id associated with node inode and the local processor ID iproc. If node inode has N processors, then the value of iproc lies between 0 and N-1.

ret = ga.compare_distr(int g_a, int g_b)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| int | g_b | array handle | input |

| bool | ret | True if distributions are identical | output |

Collective on the processor group inferred from the arguments.

Compares the distributions of two global arrays. Returns True if the distributions are identical and False when they are not.

ga.copy(int g_a, int g_b)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle copying from | input |

| int | g_b | the array handle copying to | input |

Collective on the processor group inferred from the arguments.

Copies elements in array represented by g_a into the array represented by g_b. The arrays must be the same type, shape, and identically aligned.

For patch operations, the patches of arrays may be of different shapes but must have the same number of elements. Patches must be nonoverlapping (if g_a=g_b). Transposes are allowed for patch operations.
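The two patch constraints (equal element counts, and no overlap when g_a and g_b are the same array) can be checked up front from the bounds alone. A minimal sketch, assuming inclusive lo and exclusive hi as in the parameter tables; `patch_size` and `patches_overlap` are hypothetical helpers, not ga functions.

```python
from math import prod


def patch_size(lo, hi):
    """Number of elements in the patch (lo inclusive, hi exclusive)."""
    return prod(h - l for l, h in zip(lo, hi))


def patches_overlap(alo, ahi, blo, bhi):
    """True if two patches of the same array share at least one element."""
    return all(max(al, bl) < min(ah, bh)
               for al, ah, bl, bh in zip(alo, ahi, blo, bhi))


# A 2x6 patch and a 4x3 patch: different shapes, same element count.
same_count = patch_size((0, 0), (2, 6)) == patch_size((4, 0), (8, 3))
overlap = patches_overlap((0, 0), (2, 6), (4, 0), (8, 3))
```

Two half-open boxes intersect only if their ranges intersect in every dimension, which is what the `all(...)` expression tests.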

ga.copy(int g_a, int g_b, alo=None, ahi=None,

blo=None, bhi=None, int trans=False)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle copying from | input |

| int | g_b | the array handle copying to | input |

| 1D array-like of integers | alo | lower bound patch coordinates of g_a, inclusive | input |

| 1D array-like of integers | ahi | higher bound patch coordinates of g_a, exclusive | input |

| 1D array-like of integers | blo | lower bound patch coordinates of g_b, inclusive | input |

| 1D array-like of integers | bhi | higher bound patch coordinates of g_b, exclusive | input |

| bool | trans | whether the transpose operator should be applied (True = applied) | input |

Collective on the processor group inferred from the arguments.

Copies elements in a patch of one array into another one. The patches of arrays may be of different shapes but must have the same number of elements. Patches must be non-overlapping (if g_a=g_b).

trans = False means that the transpose operator should not be applied.

trans = True means that the transpose operator should be applied.

g_a = ga.create(int gtype, dims, char *name='', chunk=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| str | name | a unique character string | input |

| int | gtype | data type e.g. C_DBL, C_INT, C_DCPL, etc. | input |

| 1D array-like of ints | dims | shape of array | input |

| 1D array-like of ints | chunk | each element specifies minimum size that given dimensions should be chunked up into | input |

| int | g_a | global array handle | output |

Collective on the default processor group.

Creates an ndim-dimensional array using the regular distribution model and returns an integer handle representing the array.

The array can be distributed evenly or not. The distribution is controlled by specifying the chunk (block) size for all or some of the array dimensions. For example, for a 2-dimensional array, setting chunk[0]=dims[0] gives distribution by vertical strips (chunk[0]*dims[0]); setting chunk[1]=dims[1] gives distribution by horizontal strips (chunk[1]*dims[1]). Actual chunks will be modified so that they are at least the size of the minimum and each process has either zero or one chunk. Specifying chunk[i] as less than 1 will cause that dimension to be distributed evenly.

As a convenience, when chunk is specified as None, the entire array is distributed evenly.

Return value: a non-zero array handle means the call was successful.

g_a = ga.create(int gtype, dims, char *name='', chunk=None,

int pgroup=-1)

| Type | Name | Description | Intent |

|---|---|---|---|

| str | name | a unique character string | input |

| int | gtype | data type e.g. C_DBL, C_INT, C_DCPL, etc. | input |

| 1D array-like of ints | dims | shape of array | input |

| 1D array-like of ints | chunk | each element specifies minimum size that given dimensions should be chunked up into | input |

| int | pgroup | processor group handle | input |

| int | g_a | global array handle | output |

Collective on the default processor group.

Creates an ndim-dimensional array using the regular distribution model but with an explicitly specified processor group handle and returns an integer handle representing the array.

This call is essentially the same as the create call, except for the processor group handle pgroup. It can also be used to create mirrored arrays.

Return value: a non-zero array handle means the call was successful.

g_a = ga.create_ghosts(int gtype, dims, width, char *name='',

chunk=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | gtype | data type (C_DBL,C_INT,C_DCPL,etc.) | input |

| 1D array-like of ints | dims | array of dimensions | input |

| 1D array-like of ints | width | array of ghost cell widths | input |

| char* | name | a unique character string | input |

| 1D array-like of ints | chunk | array of chunks, each element specifies minimum size that given dimensions should be chunked up into | input |

| int | g_a | global array handle | output |

Collective on the default processor group.

Creates an ndim-dimensional array with a layer of ghost cells around the visible data on each processor using the regular distribution model and returns an integer handle representing the array.

The array can be distributed evenly or not evenly. The distribution is controlled by specifying the chunk (block) size for all or some of the array dimensions. For example, for a 2-dimensional array, setting chunk[0]=dims[0] gives distribution by vertical strips (chunk[0]*dims[0]); setting chunk[1]=dims[1] gives distribution by horizontal strips (chunk[1]*dims[1]). Actual chunks will be modified so that they are at least the size of the minimum and each process has either zero or one chunk. Specifying chunk[i] as less than 1 will cause the i-th dimension to be distributed evenly. The width of the ghost cell layer in each dimension is specified using the array width. The local data of the global array residing on each processor will have a layer width[n] ghost cells wide on either side of the visible data along the dimension n.

Return value: a non-zero array handle means the call was successful.
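Because the ghost layer sits on both sides of the visible data, the locally stored block is larger than the visible patch by twice the width in each dimension. A small sketch of that arithmetic, assuming the visible patch is given by inclusive lo and exclusive hi bounds; `local_shape_with_ghosts` is a hypothetical helper, not a ga function.

```python
def local_shape_with_ghosts(lo, hi, width):
    """Shape of the locally stored block, ghost cells included.

    `lo`/`hi` bound the visible patch (lo inclusive, hi exclusive).
    """
    return [(h - l) + 2 * w for l, h, w in zip(lo, hi, width)]


# A 4x5 visible patch with ghost widths 1 and 2 (assumed values).
shape = local_shape_with_ghosts(lo=(0, 5), hi=(4, 10), width=(1, 2))
```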

g_a = ga.create_ghosts(int gtype, dims, width, char *name='',

chunk=None, int pgroup=-1)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | gtype | data type (C_DBL,C_INT,C_DCPL,etc.) | input |

| 1D array-like of ints | dims | array of dimensions | input |

| 1D array-like of ints | width | array of ghost cell widths | input |

| char* | name | a unique character string | input |

| 1D array-like of ints | chunk | array of chunks, each element specifies minimum size that given dimensions should be chunked up into | input |

| int | pgroup | processor group handle | input |

| int | g_a | global array handle | output |

Collective on the default processor group.

Creates an ndim-dimensional array with a layer of ghost cells around the visible data on each processor using the regular distribution model and an explicitly specified processor list and returns an integer handle representing the array.

This call is essentially the same as the create_ghosts call, except for the processor group handle pgroup. It can be used to create mirrored arrays.

Return value: a non-zero array handle means the call was successful.

g_a = ga.create_ghosts_irreg(int gtype, dims, width, block, map,

char *name='')

| Type | Name | Description | Intent |

|---|---|---|---|

| int | gtype | data type (C_DBL,C_INT,C_DCPL,etc.) | input |

| 1D array-like of ints | dims | array shape | input |

| 1D array-like of ints | width | ghost cell widths per dimension | input |

| 1D array-like of ints | block | no. of blocks each dimension is divided into | input |

| 1D array-like of ints | map | starting index for each block; len(map) is the sum of all elements of the block array | input |

| char* | name | a unique character string | input |

| int | g_a | array handle | output |

Collective on the default processor group.

Creates an array with ghost cells by following the user-specified distribution and returns an integer handle representing the array.

The distribution is specified as a Cartesian product of distributions for each dimension.

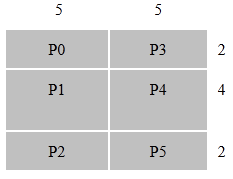

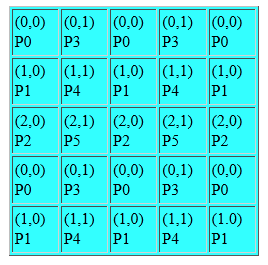

Figure "crghostir" below demonstrates distribution of a 2-dimensional array 8x10 on 6 (or more) processors.

Here block={3,2}, so the map array has s=5 elements; with zero-based indices it contains map={0,2,6,0,5}. The distribution is nonuniform because P1 and P4 get 20 elements each, while processors P0, P2, P3, and P5 get only 10 elements each.

The array width is used to control the width of the ghost cell boundary around the visible data on each processor. The local data of the Global Array residing on each processor will have a layer width[n] ghost cells wide on either side of the visible data along the dimension n.

Return value: a non-zero array handle means the call was successful.

g_a = ga.create_ghosts_irreg(int gtype, dims, width, block, map,

char *name='', int pgroup=-1)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | gtype | data type (C_DBL,C_INT,C_DCPL,etc.) | input |

| 1D array-like of ints | dims | array shape | input |

| 1D array-like of ints | width | ghost cell widths per dimension | input |

| 1D array-like of ints | block | no. of blocks each dimension is divided into | input |

| 1D array-like of ints | map | starting index for each block; len(map) is the sum of all elements of the block array | input |

| char* | name | a unique character string | input |

| int | pgroup | processor group handle | input |

| int | g_a | array handle | output |

Collective on the default processor group.

Creates an array with ghost cells by following the user-specified distribution and returns an integer handle representing the array. The user can specify that the array is created on a particular processor group.

This call is similar to the create_ghosts_irreg call.

Return value: a non-zero array handle means the call was successful.

ret = ga.create_handle()

Collective on the default processor group.

This function returns a Global Array handle that can then be used to create a new Global Array. This is part of a new API for creating Global Arrays that is designed to replace the old interface built around the NGA_Create_xxx calls. The sequence of operations is to begin with a call to GA_Create_handle to get a new array handle. The attributes of the array, such as dimension, size, type, etc., can then be set using successive calls to the GA_Set_xxx subroutines. When all array attributes have been set, the GA_Allocate subroutine is called and the Global Array is actually created and memory for it is allocated.

g_a = ga.create_irreg(int gtype, dims, block, map, char *name='')

| Type | Name | Description | Intent |

|---|---|---|---|

| int | gtype | data type (C_DBL,C_INT,C_DCPL,etc.) | input |

| 1D array-like of ints | dims | array of dimension values | input |

| 1D array-like of ints | block | no. of blocks each dimension is divided into | input |

| 1D array-like of ints | map | starting index for each block; len(map) is the sum of all elements of the block array | input |

| char* | name | a unique character string | input |

| int | g_a | array handle | output |

Collective on the default processor group.

Creates an array by following the user-specified distribution and returns an integer handle representing the array.

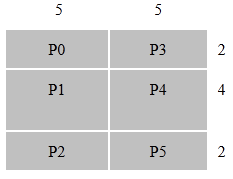

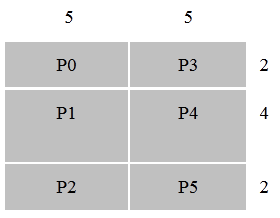

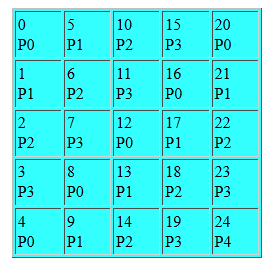

The distribution is specified as a Cartesian product of distributions for each dimension. The array indices start at 0. For example, Figure "crirreg" below demonstrates the distribution of a 2-dimensional 8x10 array on 6 (or more) processors.

Here block={3,2}, so the map array has s=5 elements; with zero-based indices it contains map={0,2,6,0,5}. The distribution is nonuniform because P1 and P4 get 20 elements each, while processors P0, P2, P3, and P5 get only 10 elements each.

Return value: a non-zero array handle means the call was successful.
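The element counts quoted for the 8x10 example can be reproduced from block and map alone. The sketch below assumes zero-based starting indices (so the map is written as 0, 2, 6, 0, 5) and assumes processors are numbered with the first dimension varying fastest, which is the ordering that gives P1 and P4 the larger blocks; `irreg_blocks` is a hypothetical helper, not a ga function.

```python
def irreg_blocks(dims, block, map_):
    """Split `map_` per dimension and return each block's (start, stop) range.

    Assumes zero-based starting indices and half-open ranges.
    """
    if len(map_) != sum(block):
        raise ValueError("len(map) must equal sum(block)")
    ranges, offset = [], 0
    for dim, nblk in zip(dims, block):
        starts = list(map_[offset:offset + nblk])
        # A block ends where the next one starts; the last ends at dim.
        stops = starts[1:] + [dim]
        ranges.append(list(zip(starts, stops)))
        offset += nblk
    return ranges


ranges = irreg_blocks(dims=(8, 10), block=(3, 2), map_=(0, 2, 6, 0, 5))
# Elements per block, listed with the first dimension varying fastest.
counts = [(r1 - r0) * (c1 - c0)
          for (c0, c1) in ranges[1] for (r0, r1) in ranges[0]]
```

The first dimension is split into blocks of 2, 4, and 2 rows and the second into two blocks of 5 columns, which yields the 10/20/10 pattern described in the text.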

g_a = ga.create_irreg(int gtype, dims, block, map, char *name='',

int pgroup=-1)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | gtype | data type (C_DBL,C_INT,C_DCPL,etc.) | input |

| 1D array-like of ints | dims | array of dimension values | input |

| 1D array-like of ints | block | no. of blocks each dimension is divided into | input |

| 1D array-like of ints | map | starting index for each block; len(map) is the sum of all elements of the block array | input |

| char* | name | a unique character string | input |

| int | pgroup | processor group handle | input |

| int | g_a | array handle | output |

Collective on the default processor group.

Creates an array by following the user-specified distribution and an explicitly specified processor group handle and returns an integer handle representing the array.

This call is essentially the same as the create_irreg call, except for the processor group handle pgroup. It can also be used to create mirrored arrays.

Return value: a non-zero array handle means the call was successful.

ret = ga.create_mutexes(int number)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | number | number of mutexes in mutex array | input |

| bool | ret | True if successful | output |

Collective on the world processor group.

Creates a set containing number mutexes. A mutex is a simple synchronization object used to protect critical sections. Only one set of mutexes can exist at a time. An array of mutexes can be created and destroyed as many times as needed.

Mutexes are numbered: 0, ..., number-1.

Returns: True on success, False on failure

ga.destroy(int g_a)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

Collective on the processor group inferred from the arguments.

Deallocates the array and frees any associated resources.

ret = ga.destroy_mutexes()

| Type | Name | Description | Intent |

|---|---|---|---|

| bool | ret | True if successful | output |

Collective on the world processor group.

Destroys the set of mutexes created with create_mutexes. Returns True if the operation succeeded or False when it failed.

ret = ga.diag(int g_a, int g_s, int g_v, evalues=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle of the matrix to diagonalize | input |

| int | g_s | the array handle of the metric | input |

| int | g_v | the array handle to return evecs | input |

| ndarray | evalues | local array of appropriate type to return evals | input |

| ndarray | ret | evals as a local array of appropriate type | output |

Collective on the processor group inferred from the arguments.

Solve the generalized eigenvalue problem returning all eigenvectors and values in ascending order. The input matrices are not overwritten or destroyed.

Returns: all eigenvalues as a vector in ascending order.

ret = ga.diag_reuse(int control, int g_a, int g_s, int g_v, evalues=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | control | 0 indicates first call to the eigensolver; >0 consecutive calls (reuses factored g_s); <0 only erases factorized g_s; g_v and eval unchanged (should be called after previous use if another eigenproblem, i.e., different g_a and g_s, is to be solved) | input |

| int | g_a | the array handle of the matrix to diagonalize | input |

| int | g_s | the array handle of the metric | input |

| int | g_v | the array handle to return evecs | input |

| ndarray | evalues | local array of appropriate type to return evals | input |

| ndarray | ret | evals as a local array of appropriate type | output |

Collective on the processor group inferred from the arguments.

Solve the generalized eigenvalue problem returning all eigenvectors and values in ascending order. Recommended for REPEATED calls if g_s is unchanged. Values of the control flag:

| value | action/purpose |
|---|---|
| 0 | indicates first call to the eigensolver |
| >0 | consecutive calls (reuses factored g_s) |
| <0 | only erases factorized g_s; g_v and eval unchanged (should be called after previous use if another eigenproblem, i.e., different g_a and g_s, is to be solved) |

The input matrices are not destroyed.

Returns: all eigenvalues as a vector in ascending order.

ret = ga.diag_std(int g_a, int g_v, evalues=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle of the matrix to diagonalize | input |

| int | g_v | the array handle to return evecs | input |

| ndarray | evalues | local array of appropriate type to return evals | input |

| ndarray | ret | evals as a local array of appropriate type | output |

Collective on the processor group inferred from the arguments.

Solve the standard (non-generalized) eigenvalue problem returning all eigenvectors and values in ascending order. The input matrix is neither overwritten nor destroyed.

Returns: all eigenvectors via the g_v global array, and eigenvalues as an array in ascending order

lo,hi = ga.distribution(int g_a, int iproc=-1)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| int | iproc | process number | input |

| 1D ndarray of ints | lo | array of starting indices for array section | output |

| 1D ndarray of ints | hi | array of ending indices for array section | output |

Local operation.

This function returns the bounding indices of the block owned by the process iproc. These indices are inclusive.

Return the distribution given to iproc. If iproc is not specified, then ga.nodeid() is used. The range is returned as -1 for lo and -2 for hi if no elements are owned by iproc.

ret = ga.dot(int g_a, int g_b)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| int | g_b | the array handle | input |

Collective on the processor group inferred from the arguments.

Computes the element-wise dot product of the two arrays which must be of the same types and same number of elements.

Return value = SUM_ij a(i,j)*b(i,j)
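The sum above can be written out directly for small 2-dimensional inputs. This is a sketch of the arithmetic only, using nested lists in place of global arrays; it is stricter than the text in that it requires equal shapes rather than just equal element counts, and the local `dot` function is a stand-in, not ga.dot.

```python
def dot(a, b):
    """Return the sum over i, j of a[i][j] * b[i][j] for 2-D nested lists."""
    if len(a) != len(b) or any(len(ra) != len(rb) for ra, rb in zip(a, b)):
        raise ValueError("arrays must have the same shape")
    return sum(x * y for ra, rb in zip(a, b) for x, y in zip(ra, rb))


# 1*5 + 2*6 + 3*7 + 4*8
result = dot([[1, 2], [3, 4]], [[5, 6], [7, 8]])
```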

ret = ga.dot(int g_a, int g_b,

alo=None, ahi=None, blo=None, bhi=None,

int ta=False, int tb=False)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| int | g_b | the array handle | input |

| 1D array-like of ints | alo | lower bound patch coordinates of g_a, inclusive | input |

| 1D array-like of ints | ahi | higher bound patch coordinates of g_a, exclusive | input |

| 1D array-like of ints | blo | lower bound patch coordinates of g_b, inclusive | input |

| 1D array-like of ints | bhi | higher bound patch coordinates of g_b, exclusive | input |

| bool | ta | whether the transpose operator should be applied to g_a True=applied | input |

| bool | tb | whether the transpose operator should be applied to g_b True=applied | input |

Collective on the processor group inferred from the arguments.

Computes the element-wise dot product of the two (possibly transposed) patches which must be of the same type and have the same number of elements.

ret = ga.duplicate(int g_a, char *name='')

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | integer handle for reference array | input |

| char* | name | a character string | input |

| int | ret | integer handle for duplicated array | output |

Collective on the processor group inferred from the arguments.

Creates a new array by applying all the properties of another existing array. It returns an array handle.

Return value: a non-zero array handle means the call was successful.

ga.elem_divide(int g_a, int g_b, int g_c)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| int | g_b | the array handle | input |

| int | g_c | the array handle | input |

Collective on the processor group inferred from the arguments.

Computes the element-wise quotient of the two arrays which must be of the same types and same number of elements. For two-dimensional arrays,

c(i,j) = a(i,j)/b(i,j)

The result (c) may replace one of the input arrays (a/b). If one of the elements of array g_b is zero, the quotient for the element of g_c will be set to GA_NEGATIVE_INFINITY.
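The zero-divisor rule can be modeled on plain nested lists as in the sketch below. `elem_divide_model` and `NEG_INF` are hypothetical names, and using `float("-inf")` as a stand-in for GA_NEGATIVE_INFINITY is an assumption; the actual constant used by the library may differ.

```python
NEG_INF = float("-inf")  # assumed stand-in for GA_NEGATIVE_INFINITY


def elem_divide_model(a, b):
    """Element-wise a/b on 2-D nested lists; zero divisors yield NEG_INF."""
    return [[x / y if y != 0 else NEG_INF for x, y in zip(ra, rb)]
            for ra, rb in zip(a, b)]


c = elem_divide_model([[1.0, 4.0], [9.0, 2.0]],
                      [[2.0, 0.0], [3.0, 4.0]])
```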

ga.elem_divide(int g_a, int g_b, int g_c,

alo=None, ahi=None,

blo=None, bhi=None,

clo=None, chi=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| int | g_b | the array handle | input |

| int | g_c | the array handle | input |

| 1D array-like of integers | alo | lower bound patch coordinates of g_a, inclusive | input |

| 1D array-like of integers | ahi | higher bound patch coordinates of g_a, exclusive | input |

| 1D array-like of integers | blo | lower bound patch coordinates of g_b, inclusive | input |

| 1D array-like of integers | bhi | higher bound patch coordinates of g_b, exclusive | input |

| 1D array-like of integers | clo | lower bound patch coordinates of g_c, inclusive | input |

| 1D array-like of integers | chi | higher bound patch coordinates of g_c, exclusive | input |

Collective on the processor group inferred from the arguments.

Computes the element-wise quotient of the two patches which must be of the same types and same number of elements. For two-dimensional arrays,

c(i,j) = a(i,j)/b(i,j)

The result (c) may replace one of the input arrays (a/b).

ga.elem_maximum(int g_a, int g_b, int g_c)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| int | g_b | the array handle | input |

| int | g_c | the array handle | input |

Collective on the processor group inferred from the arguments.

Computes the element-wise maximum of the two arrays which must be of the same types and same number of elements. For two-dimensional arrays,

c(i,j) = max{a(i,j), b(i,j)}

The result (c) may replace one of the input arrays (a/b).

ga.elem_maximum(int g_a, int g_b, int g_c,

alo=None, ahi=None,

blo=None, bhi=None,

clo=None, chi=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| int | g_b | the array handle | input |

| int | g_c | the array handle | input |

| 1D array-like of integers | alo | lower bound patch coordinates of g_a, inclusive | input |

| 1D array-like of integers | ahi | higher bound patch coordinates of g_a, exclusive | input |

| 1D array-like of integers | blo | lower bound patch coordinates of g_b, inclusive | input |

| 1D array-like of integers | bhi | higher bound patch coordinates of g_b, exclusive | input |

| 1D array-like of integers | clo | lower bound patch coordinates of g_c, inclusive | input |

| 1D array-like of integers | chi | higher bound patch coordinates of g_c, exclusive | input |

Collective on the processor group inferred from the arguments.

Computes the element-wise maximum of the two patches which must be of the same types and same number of elements. For two-dimensional noncomplex arrays,

c(i,j) = max{a(i,j), b(i,j)}

If the data type is complex, then

c(i,j).real = max{ |a(i,j)|, |b(i,j)| } while c(i,j).imag = 0.

The result (c) may replace one of the input arrays (a/b).
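The difference between the noncomplex and complex rules can be sketched in pure Python as follows. `elem_maximum_model` is a hypothetical helper working on nested lists, not a ga4py function; the minimum variant would follow the same pattern with `min`.

```python
def elem_maximum_model(a, b):
    """Element-wise maximum on 2-D nested lists, following the rules above."""
    def pick(x, y):
        if isinstance(x, complex) or isinstance(y, complex):
            # Complex data: compare magnitudes, result has zero imaginary part.
            return complex(max(abs(x), abs(y)), 0.0)
        return max(x, y)

    return [[pick(x, y) for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


real_c = elem_maximum_model([[1, 5]], [[3, 2]])
cplx_c = elem_maximum_model([[3 + 4j]], [[1 + 1j]])
```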

ga.elem_minimum(int g_a, int g_b, int g_c)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| int | g_b | the array handle | input |

| int | g_c | the array handle | input |

Collective on the processor group inferred from the arguments.

Computes the element-wise minimum of the two arrays which must be of the same types and same number of elements. For two-dimensional arrays,

c(i,j) = min{a(i,j), b(i,j)}

The result (c) may replace one of the input arrays (a/b).

ga.elem_minimum(int g_a, int g_b, int g_c,

alo=None, ahi=None,

blo=None, bhi=None,

clo=None, chi=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| int | g_b | the array handle | input |

| int | g_c | the array handle | input |

| 1D array-like of integers | alo | lower bound patch coordinates of g_a, inclusive | input |

| 1D array-like of integers | ahi | higher bound patch coordinates of g_a, exclusive | input |

| 1D array-like of integers | blo | lower bound patch coordinates of g_b, inclusive | input |

| 1D array-like of integers | bhi | higher bound patch coordinates of g_b, exclusive | input |

| 1D array-like of integers | clo | lower bound patch coordinates of g_c, inclusive | input |

| 1D array-like of integers | chi | higher bound patch coordinates of g_c, exclusive | input |

Collective on the processor group inferred from the arguments.

Computes the element-wise minimum of the two patches which must be of the same types and same number of elements. For two-dimensional noncomplex arrays,

c(i,j) = min{a(i,j), b(i,j)}

If the data type is complex, then

c(i,j).real = min{ |a(i,j)|, |b(i,j)| } while c(i,j).imag = 0.

The result (c) may replace one of the input arrays (a/b).

ga.elem_multiply(int g_a, int g_b, int g_c)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| int | g_b | the array handle | input |

| int | g_c | the array handle | input |

Collective on the processor group inferred from the arguments.

Computes the element-wise product of the two arrays which must be of the same types and same number of elements. For two-dimensional arrays,

c(i, j) = a(i,j)*b(i,j)

The result (c) may replace one of the input arrays (a/b).

ga.elem_multiply(int g_a, int g_b, int g_c,

alo=None, ahi=None,

blo=None, bhi=None,

clo=None, chi=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| int | g_b | the array handle | input |

| int | g_c | the array handle | input |

| 1D array-like of integers | alo | lower bound patch coordinates of g_a, inclusive | input |

| 1D array-like of integers | ahi | higher bound patch coordinates of g_a, exclusive | input |

| 1D array-like of integers | blo | lower bound patch coordinates of g_b, inclusive | input |

| 1D array-like of integers | bhi | higher bound patch coordinates of g_b, exclusive | input |

| 1D array-like of integers | clo | lower bound patch coordinates of g_c, inclusive | input |

| 1D array-like of integers | chi | higher bound patch coordinates of g_c, exclusive | input |

Collective on the processor group inferred from the arguments.

Computes the element-wise product of the two patches which must be of the same types and same number of elements. For two-dimensional arrays,

c(i,j) = a(i,j)*b(i,j)

The result (c) may replace one of the input arrays (a/b).

ga.error(char *message, int code=1)

| Type | Name | Description | Intent |

|---|---|---|---|

| char* | message | string to print | input |

| int | code | code to print | input |

Local operation.

To be called in case of an error. Prints an error message and an integer value that represents an error code, and releases some system resources. This is the required way of aborting the program execution.

ga.fence()

One-sided (non-collective).

Blocks the calling process until all the data transfers corresponding to GA operations called after ga_init_fence complete. For example, since ga_put might return before the data reaches the final destination, ga_init_fence and ga_fence allow the process to wait until the data transfer is fully completed:

ga_init_fence();

ga_put(g_a, ...);

ga_fence();

ga_fence must be called after ga_init_fence. A barrier, ga_sync, assures the completion of all data transfers and implicitly cancels all outstanding ga_init_fence calls. ga_init_fence and ga_fence must be used in pairs: multiple calls to ga_fence require the same number of corresponding ga_init_fence calls. ga_init_fence/ga_fence pairs can be nested.

ga_fence works for multiple GA operations. For example:

ga_init_fence();

ga_put(g_a, ...);

ga_scatter(g_a, ...);

ga_put(g_b, ...);

ga_fence();

The calling process will be blocked until data movements initiated by two calls to ga_put and one ga_scatter complete.
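The snippets above use the C-style names. A Python counterpart might look like the sketch below, which uses `ga.init_fence()` and `ga.fence()` as documented in this reference and assumes that `ga.put` takes `(g_a, buffer, lo, hi)` in the same way as `ga.nbput`. The `RecordingGA` class is a hypothetical stand-in that only logs calls, so the example runs without a GA installation; in real code you would pass the imported `ga` module instead.

```python
def put_and_fence(ga, g_a, buffer, lo, hi):
    """Put a patch, then block until the transfer has completed."""
    ga.init_fence()               # start tracking data movement
    ga.put(g_a, buffer, lo, hi)   # may return before the data arrives
    ga.fence()                    # wait for the tracked transfers


class RecordingGA:
    """Hypothetical stand-in for the ga module; it only records call names."""

    def __init__(self):
        self.calls = []

    def init_fence(self):
        self.calls.append("init_fence")

    def put(self, g_a, buffer, lo=None, hi=None):
        self.calls.append("put")

    def fence(self):
        self.calls.append("fence")


fake = RecordingGA()
put_and_fence(fake, 0, [[1.0]], [0, 0], [1, 1])
```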

ga.fill(int g_a, value)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| object | value | object of the value of appropriate type (double/double complex/long) that matches array type | input |

Collective on the processor group inferred from the arguments.

Assign a single value to all elements in the array.

ga.fill(int g_a, value, lo=None, hi=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| object | value | object of the value of appropriate type (double/double complex/long) that matches array type | input |

| 1D array-like of ints | lo | lower bound patch coordinates, inclusive | input |

| 1D array-like of ints | hi | higher bound patch coordinates, exclusive | input |

Collective on the processor group inferred from the arguments.

Fill the patch of g_a with value.

ga.free_gatscat_buf()

Local operation.

This function is used to free up internal buffers that were set with the corresponding allocation call. The buffers can be used to improve performance if multiple calls are being made to the gather/scatter operations.

ret = ga.gather(int g_a, subsarray, ndarray values=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | global array handle | input |

| 1D or 2D array-like of ints | subsarray | array of subscripts for each element | input |

| 1D array-like | values | buffer to be overwritten | input |

| 1D array-like | ret | array containing values | output |

One-sided (non-collective).

Gathers array elements from a global array into a local array. The contents of the input arrays (v, subsArray) are preserved.

for (k=0; k< n; k++)

{v[k] = a[subsArray[k][0]][subsArray[k][1]][subsArray[k][2]]...;}

subsarray will be converted to an ndarray if it is not one already. A two-dimensional array is allowed so long as its shape is (n,ndim) where n is the number of elements to gather and ndim is the number of dimensions of the target array. Also, subsarray must be contiguous.

For example, if the subsarray were two-dimensional:

    for k in range(n):
        v[k] = g_a[subsarray[k,0],subsarray[k,1],subsarray[k,2]...]

For example, if the subsarray were one-dimensional:

    for k in range(n):
        base = k*ndim
        v[k] = g_a[subsarray[base+0],subsarray[base+1],subsarray[base+2]...]
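The two subscript layouts can be compared with a small pure-Python model. `gather_model` is a hypothetical helper that indexes a nested list instead of a global array, and it assumes that in the flat layout the subscripts of element k start at offset `k*ndim`.

```python
def gather_model(array, subsarray, ndim):
    """Pick elements of a nested-list array using 2-D or flat 1-D subscripts."""
    if subsarray and isinstance(subsarray[0], (list, tuple)):
        # Two-dimensional layout: one row of ndim subscripts per element.
        subscripts = [tuple(s) for s in subsarray]
    else:
        # Flat layout: element k uses entries k*ndim .. k*ndim + ndim - 1.
        n = len(subsarray) // ndim
        subscripts = [tuple(subsarray[k * ndim:(k + 1) * ndim])
                      for k in range(n)]
    values = []
    for subscript in subscripts:
        element = array
        for index in subscript:
            element = element[index]
        values.append(element)
    return values


grid = [[10, 11, 12], [20, 21, 22]]
from_2d = gather_model(grid, [[0, 2], [1, 0]], ndim=2)
from_1d = gather_model(grid, [0, 2, 1, 0], ndim=2)
```

Both calls select the same two elements, which shows that the flat form is just the row-major flattening of the (n,ndim) form.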

ga.gemm(int ta, int tb, int64_t m, int64_t n, int64_t k,

alpha, int g_a, int g_b, beta, int g_c)

ta (bool) - transpose operator

tb (bool) - transpose operator

m (int) - number of rows of op(A) and of matrix C

n (int) - number of columns of op(B) and of matrix C

k (int) - number of columns of op(A) and rows of matrix op(B)

alpha (object) - scale factor

g_a (int) - handle to input array

g_b (int) - handle to input array

beta (object) - scale factor

g_c (int) - handle to output array

| Type | Name | Description | Intent |

|---|---|---|---|

| char | ta | transpose operator for g_a | input |

| char | tb | transpose operator for g_b | input |

| int | m | number of rows of op(A) and of matrix C | input |

| int | n | number of columns of op(B) and of matrix C | input |

| int | k | number of columns of op(A) and rows of matrix op(B) | input |

| object | alpha | scale factor | input |

| int | g_a | handle to input arrays | input |

| int | g_b | handle to input arrays | input |

| object | beta | scale factor | input |

| int | g_c | handle to output array | input |

Collective on the processor group inferred from the arguments.

Performs one of the matrix-matrix operations:

C := alpha*op( A )*op( B ) + beta*C,

where op( X ) is one of

op( X ) = X or op( X ) = X',

alpha and beta are scalars, and A, B, and C are matrices, with op( A ) an m by k matrix, op( B ) a k by n matrix and C an m by n matrix.

On entry, ta specifies the form of op( A ) to be used in the matrix multiplication as follows:

ta = `N' or `n', op( A ) = A.

ta = `T' or `t', op( A ) = A'.
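To make the operation concrete, here is a pure-Python model of C := alpha*op(A)*op(B) + beta*C on nested lists. `gemm_model` and `transpose` are hypothetical names; the model takes boolean transpose flags, infers m, n and k from the inputs, and returns a new matrix instead of updating C in place.

```python
def transpose(m):
    """Return the transpose of a nested-list matrix."""
    return [list(col) for col in zip(*m)]


def gemm_model(ta, tb, alpha, a, b, beta, c):
    """Return alpha*op(A)*op(B) + beta*C for nested-list matrices."""
    op_a = transpose(a) if ta else a
    op_b = transpose(b) if tb else b
    m, k, n = len(op_a), len(op_b), len(op_b[0])
    if len(op_a[0]) != k or len(c) != m or len(c[0]) != n:
        raise ValueError("shape mismatch")
    return [[alpha * sum(op_a[i][p] * op_b[p][j] for p in range(k))
             + beta * c[i][j]
             for j in range(n)]
            for i in range(m)]


a = [[1, 2], [3, 4]]
b = [[0, 1], [1, 0]]
c = [[1, 1], [1, 1]]
plain = gemm_model(False, False, 2, a, b, 1, c)   # 2*A*B + C
with_ta = gemm_model(True, False, 1, a, b, 0, c)  # A'*B
```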

ret = ga.get(int g_a, lo=None, hi=None, ndarray buffer=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| 1D array-like of ints | lo | lower bound patch coordinates, inclusive | input |

| 1D array-like of ints | hi | higher bound patch coordinates, exclusive | input |

| ndarray | buffer | an ndarray of the appropriate type, large enough to hold lo,hi | input |

| ndarray | ret | local buffer array where the data goes to | output |

One-sided (non-collective).

Copies data from global array section to the local array buffer. The local array is assumed to have the same number of dimensions as the global array. Any detected inconsistencies or errors in the input arguments are fatal.

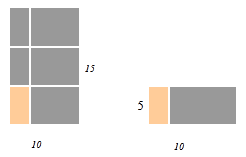

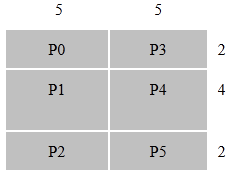

Example: For the ga_get operation transferring data from the [10:14, 0:4] section of a 2-dimensional 15x10 global array into a local 5x10 buffer array we have:

lo={10,0}, hi={14,4}, ld={10}

Figure "get" below shows the GET operation.

Return: The local array buffer.

If buffer is None, then a new ndarray will be allocated. Otherwise, the same buffer that was passed in will be updated and returned.

num_blocks,block_dims = ga.get_block_info(int g_a)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| 1D ndarray of ints | num_blocks | number of blocks along each axis | output |

| 1D ndarray of ints | block_dims | dimensions of block | output |

Local operation.

This subroutine returns information about the block-cyclic distribution associated with global array g_a. The number of blocks along each of the array axes are returned in the array num_blocks and the dimensions of the individual blocks, specified in the GA_Set_block_cyclic or GA_Set_block_cyclic_proc_grid subroutines, are returned in block_dims.

This is a local function.

ret = ga.get_debug()

Local operation.

This function returns the value of an internal flag in the GA library whose value can be set using the GA_Set_debug subroutine.

ga.get_diag(int g_a, int g_v)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| int | g_v | array handle | input |

Collective on the processor group inferred from the arguments.

Inserts the diagonal elements of this matrix g_a into the vector g_v.

ret = ga.get_ghost_block(int g_a, lo=None, hi=None, ndarray buffer=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| 1D array-like of ints | lo | lower bound patch coordinates, inclusive | input |

| 1D array-like of ints | hi | higher bound patch coordinates, exclusive | input |

| ndarray | buffer | an ndarray of the appropriate type, large enough to hold lo,hi | input |

| ndarray | ret | local buffer array where the data goes to | output |

One-sided (non-collective).

This operation behaves similarly to the GA Get operation and will default to a standard Get if the global array has no ghost cells or the request does not fit into the ghost cell region of the calling processor. It is easier to use than the NGA_Access_ghosts function but it may be slower and requires more memory. The operation will copy data from the locally held portions of the global array, including the ghost cells, if the requested block falls within the region defined by the visible block held by the process plus the ghost cell region. For example, if the process holds the visible block [2:8, 2:8] with a ghost cell width that is one element deep, then a request for the block [1:9,1:9] will use the local ghost cells to fill the local buffer. In this case, the data transfer is completely local. If the request is for a block such as [1:10,1:10], which would require data from another process, then the NGA_Get_ghost_block call reverts to an ordinary NGA_Get operation and ignores the locally held ghost data.

Return: The local array buffer.
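The containment rule described above can be expressed as a short check. `served_from_ghosts` is a hypothetical helper, not part of ga4py; it assumes a uniform ghost width in every dimension and inclusive bounds, matching the [2:8, 2:8] example in the text.

```python
def served_from_ghosts(vis_lo, vis_hi, width, req_lo, req_hi):
    """True if the request lies inside the visible block grown by `width`.

    All bounds are inclusive, as in the [2:8, 2:8] example.
    """
    return all(vl - width <= rl and rh <= vh + width
               for vl, vh, rl, rh in zip(vis_lo, vis_hi, req_lo, req_hi))


# Request [1:9, 1:9] fits into the visible block plus one ghost layer.
local = served_from_ghosts([2, 2], [8, 8], 1, [1, 1], [9, 9])
# Request [1:10, 1:10] reaches beyond the ghost region.
remote = served_from_ghosts([2, 2], [8, 8], 1, [1, 1], [10, 10])
```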

ret = ga.gop(X, char *op)

ret = ga.gop_add(X)

ret = ga.gop_multiply(X)

ret = ga.gop_max(X)

ret = ga.gop_min(X)

ret = ga.gop_absmax(X)

ret = ga.gop_absmin(X)

| Type | Name | Description | Intent |

|---|---|---|---|

| 1D array-like | X | elements | input |

| char* | op | operator | input |

| ndarray | ret | result after numpy.asarray(X) followed by the operation | output |

Collective on the world processor group.

Global OPeration.

X(1:N) is a vector present on each process. GOP `sums' elements of X across all nodes using the commutative operator OP. The result is broadcast to all nodes. Supported operations include `+', `*', `max', `min', `absmax', `absmin'. Operators must be given in lowercase.

This operation is provided only for convenience purposes: it is available regardless of the message-passing library that GA is running with.
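The reduction can be pictured with a single-process model in which each inner list plays the role of the vector X held by one process. `gop_model` and `OPS` are hypothetical names; the real operation communicates between processes, which this sketch does not attempt.

```python
from functools import reduce

OPS = {
    "+": lambda x, y: x + y,
    "*": lambda x, y: x * y,
    "max": max,
    "min": min,
}


def gop_model(vectors, op):
    """Combine one vector per process element by element."""
    if op in ("absmax", "absmin"):
        # Take absolute values first, then fall back to plain max/min.
        vectors = [[abs(x) for x in v] for v in vectors]
        op = op[3:]
    combine = OPS[op]
    return [reduce(combine, column) for column in zip(*vectors)]


per_process = [[1, -7, 3], [4, 2, -6]]
summed = gop_model(per_process, "+")
largest_abs = gop_model(per_process, "absmax")
```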

ret = ga.has_ghosts(int g_a)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| int | ret | True if array has ghost cells | output |

Collective on the processor group inferred from the arguments.

This function returns 1 if the global array has some dimensions for which the ghost cell width is greater than zero; otherwise it returns 0.

ga.init_fence()

Local operation.

Initializes tracing of the completion status of data movement operations.

from ga4py import ga

Collective on the processor group inferred from the arguments.

Allocate and initialize internal data structures in Global Arrays.

ga.initialize_ltd(size_t limit)

| Type | Name | Description | Intent |

|---|---|---|---|

| size_t | limit | amount of memory in bytes per process | input |

Collective on the processor group inferred from the arguments.

Allocate and initialize internal data structures and set the limit for memory used in Global Arrays. The limit is per process: it is the amount of memory that the given processor can contribute to collective allocation of Global Arrays. It does not include temporary storage that GA might be allocating (and releasing) during execution of a particular operation.

limit < 0 means "allow unlimited memory usage" in which case this operation is equivalent to GA_initialize.

type,shape = ga.inquire(int g_a)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| int | type | data type | output |

| 1D ndarray of ints | shape | shape of array g_a | output |

Local operation.

Returns data type and dimensions of the array.

ret = ga.inquire_memory()

Returns the amount of memory (in bytes) used in the allocated global arrays on the calling processor.

ret = ga.inquire_name(int g_a)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

Local operation.

Returns the name of an array represented by the handle g_a.

ret = ga.is_mirrored(int g_a)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| bool | ret | True/False | output |

Local operation.

This subroutine checks if the array is a mirrored array or not. Returns 1 if it is a mirrored array, else it returns 0.

ret = ga.llt_solve(int g_a, int g_b)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | coefficient matrix | input |

| int | g_b | rhs/solution matrix | input |

| int | ret | 0 if successful | output |

Collective on the processor group inferred from the arguments.

Solves a system of linear equations

A * X = B

using the Cholesky factorization of an NxN double precision symmetric positive definite matrix A (represented by handle g_a). On successful exit B will contain the solution X.

It returns:

= 0 : successful exit

> 0 : the leading minor of this order is not positive definite and the factorization could not be completed.
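The meaning of the return code can be illustrated with a textbook Cholesky solve in pure Python. `llt_solve_model` is a hypothetical name and this is not how the library implements the routine; it handles a single right-hand-side vector and returns the solution instead of overwriting B.

```python
import math


def llt_solve_model(a, b):
    """Solve A x = b via Cholesky; return (info, x) with info as described."""
    n = len(a)
    l = [[0.0] * n for _ in range(n)]
    for j in range(n):
        d = a[j][j] - sum(l[j][p] ** 2 for p in range(j))
        if d <= 0.0:
            # Leading minor of order j+1 is not positive definite.
            return j + 1, None
        l[j][j] = math.sqrt(d)
        for i in range(j + 1, n):
            s = sum(l[i][p] * l[j][p] for p in range(j))
            l[i][j] = (a[i][j] - s) / l[j][j]
    y = [0.0] * n  # forward substitution: L y = b
    for i in range(n):
        y[i] = (b[i] - sum(l[i][p] * y[p] for p in range(i))) / l[i][i]
    x = [0.0] * n  # back substitution: L' x = y
    for i in reversed(range(n)):
        s = sum(l[p][i] * x[p] for p in range(i + 1, n))
        x[i] = (y[i] - s) / l[i][i]
    return 0, x


info, x = llt_solve_model([[4.0, 2.0], [2.0, 3.0]], [10.0, 8.0])
bad_info, _ = llt_solve_model([[1.0, 2.0], [2.0, 1.0]], [1.0, 1.0])
```

The second matrix is symmetric but not positive definite, so the model stops at the second leading minor and reports a positive code.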

ret = ga.locate(int g_a, subscript)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| 1D array-like of ints | subscript | element subscript | input |

| int | ret | process ID owning the element at subscript | output |

Local operation.

Return the GA compute process ID that 'owns' the data. If any element of subscript[] is out of bounds "-1" is returned.

map,procs = ga.locate_region(int g_a, lo, hi)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | global array handle | input |

| 1D array-like of ints | lo | lower bound patch coordinates, inclusive | input |

| 1D array-like of ints | hi | higher bound patch coordinates, exclusive | input |

| 2D ndarray of ints | map | array with mapping information; map.shape = 2,ndim | output |

| 1D ndarray of ints | procs | list of processes that own a part of array section | output |

Local operation.

Return a list of the GA process IDs that `own' the data. Parts of the specified patch might actually be `owned' by several processes. If lo/hi are out of bounds "0" is returned, otherwise the return value is equal to the number of processes that hold the data.

ga.lock(int mutex)

One-sided (non-collective).

Locks a mutex object identified by the mutex number. It is a fatal error for a process to attempt to lock a mutex which was already locked by this process.

ga.lu_solve(int g_a, int g_b, bint trans=False)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle for the coefficient matrix | input |

| int | g_b | the array handle for the solution matrix | input |

| bool | trans | transpose (True) or not transpose (False) | input |

Collective on the processor group inferred from the arguments.

Solve the system of linear equations op(A)X = B based on the LU factorization.

op(A) = A or A' depending on the parameter trans:

trans = `N' or `n' means that the transpose operator should not be applied.

trans = `T' or `t' means that the transpose operator should be applied.

Matrix A is a general real matrix. Matrix B contains possibly multiple rhs vectors. The array associated with the handle g_b is overwritten by the solution matrix X.

ga.mask_sync(int first, int last)

Collective on the default processor group.

This subroutine can be used to remove synchronization calls from around collective operations. Setting the parameter first = .false. removes the synchronization prior to the collective operation, setting last = .false. removes the synchronization call after the collective operation. This call is applicable to all collective operations. It must be invoked before each collective operation.

ga.matmul_patch(bint transa, bint transb, alpha, beta,

int g_a, alo, ahi,

int g_b, blo, bhi,

int g_c, clo, chi)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a, g_b, g_c | array handles | input |

| 1D array-like of ints | alo, ahi | patch of g_a | input |

| 1D array-like of ints | blo, bhi | patch of g_b | input |

| 1D array-like of ints | clo, chi | patch of g_c | input |

| object | alpha, beta | scale factors | input |

| bool | transa, transb | transpose operators | input |

Collective on the processor group inferred from the arguments.

ga_matmul_patch is a patch version of ga_dgemm and comes in 2-D and N-D versions. The 2-D interface performs the operation:

C[cilo:cihi,cjlo:cjhi] := alpha * ( AA[ailo:aihi,ajlo:ajhi] *

BB[bilo:bihi,bjlo:bjhi] ) +

beta*C[cilo:cihi,cjlo:cjhi],

where AA = op(A), BB = op(B), and op(X) is one of

op(X) = X or op(X) = X',

Valid values for transpose arguments: 'n', 'N', 't', 'T'. It works for both double and double complex data types.

nga_matmul_patch is a N-dimensional patch version of ga_dgemm and is similar to the 2-D interface:

C[clo[]:chi[]] := alpha * ( AA[alo[]:ahi[]] *

BB[blo[]:bhi[]] ) + beta*C[clo[]:chi[]],

ga.median(int g_a, int g_b, int g_c, int g_m)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| int | g_b | the array handle | input |

| int | g_c | the array handle | input |

| int | g_m | the array handle for the result | input |

Collective on the processor group inferred from the arguments.

Computes the componentwise Median of three arrays g_a, g_b, and g_c, and stores the result in this array g_m. The result (m) may replace one of the input arrays (a/b/c).
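A componentwise median of three values is simply the middle one after sorting, as the pure-Python sketch below shows on nested lists. `median_model` is a hypothetical name and not part of ga4py.

```python
def median_model(a, b, c):
    """Componentwise median of three equally shaped 2-D nested lists."""
    return [[sorted((x, y, z))[1] for x, y, z in zip(ra, rb, rc)]
            for ra, rb, rc in zip(a, b, c)]


m = median_model([[1, 9]], [[5, 2]], [[3, 4]])
```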

ga.median(int g_a, int g_b, int g_c, int g_m,

alo=None, ahi=None, blo=None, bhi=None,

clo=None, chi=None, mlo=None, mhi=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| int | g_b | the array handle | input |

| int | g_c | the array handle | input |

| int | g_m | the array handle for the result | input |

| 1D array-like of integers | alo | lower bound patch coordinates of g_a, inclusive | input |

| 1D array-like of integers | ahi | higher bound patch coordinates of g_a, exclusive | input |

| 1D array-like of integers | blo | lower bound patch coordinates of g_b, inclusive | input |

| 1D array-like of integers | bhi | higher bound patch coordinates of g_b, exclusive | input |

| 1D array-like of integers | clo | lower bound patch coordinates of g_c, inclusive | input |

| 1D array-like of integers | chi | higher bound patch coordinates of g_c, exclusive | input |

| 1D array-like of integers | mlo | lower bound patch coordinates of g_m, inclusive | input |

| 1D array-like of integers | mhi | higher bound patch coordinates of g_m, exclusive | input |

Collective on the processor group inferred from the arguments.

Computes the componentwise Median of three patches g_a, g_b, and g_c, and stores the result in this patch g_m. The result (m) may replace one of the input patches (a/b/c).

ret = ga.memory_avail()

Local operation.

Returns amount of memory (in bytes) left for allocation of new global arrays on the calling processor.

Note: If GA_uses_ma returns true, then GA_Memory_avail returns the lesser of the amount available under the GA limit and the amount available from MA (according to ma_inquire_avail operation). If no GA limit has been set, it returns what MA says is available.

If ( !GA_Uses_ma() && !GA_Memory_limited() ) returns < 0, indicating that the bound on currently available memory cannot be determined.

ret = ga.memory_limited()

Local operation.

Indicates if limit is set on memory usage in Global Arrays on the calling processor. "1" means "yes", "0" means "no".

Returns: True for "yes", False for "no"

ga.merge_distr_patch(int g_a, alo, ahi, int g_b, blo, bhi)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| 1D array-like of integers | alo | g_a patch coordinate | input |

| 1D array-like of integers | ahi | g_a patch coordinate | input |

| int | g_b | array handle | input |

| 1D array-like of integers | blo | g_b patch coordinate | input |

| 1D array-like of integers | bhi | g_b patch coordinate | input |

Collective on the processor group inferred from the arguments.

This function merges all copies of a patch of a mirrored array (g_a) into a patch in a distributed array (g_b).

ga.merge_mirrored(int g_a)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

Collective on the processor group inferred from the arguments.

This subroutine merges mirrored arrays by adding the contents of each array across nodes. The result is that each mirrored copy of the array represented by g_a is the sum of the individual arrays before the merge operation. After the merge, all mirrored arrays are equal.
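The effect of the merge can be modeled with one list per mirrored copy. `merge_mirrored_model` is a hypothetical helper that uses 1-D lists for brevity; the real operation works on the copies held on different nodes.

```python
def merge_mirrored_model(copies):
    """Replace every mirrored copy with the element-wise sum of all copies."""
    total = [sum(values) for values in zip(*copies)]
    # Every copy ends up holding the same summed data.
    return [list(total) for _ in copies]


merged = merge_mirrored_model([[1, 0, 2], [0, 3, 4]])
```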

nbhandle = ga.nbacc(int g_a, buffer, lo=None, hi=None, alpha=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| array-like | buffer | the data to put | input |

| 1D array-like of ints | lo | lower bound patch coordinates, inclusive | input |

| 1D array-like of ints | hi | higher bound patch coordinates, exclusive | input |

| object | alpha | scale factor, cast to the appropriate type | input |

| ga_nbhdl_t | nbhandle | nonblocking handle | output |

One-sided (non-collective).

Non-blocking version of ga.acc. The accumulate operation can be completed locally by making a call to the ga.nbwait() routine.

Combines data from buffer with data in the global array patch.

The buffer array is assumed to have the same number of dimensions as the global array. If the buffer is not contiguous, a contiguous copy will be made.

global array section (lo[],hi[]) += alpha * buffer

ret,nbhandle = ga.nbget(int g_a, lo=None, hi=None, ndarray buffer=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| 1D array-like of ints | lo | lower bound patch coordinates, inclusive | input |

| 1D array-like of ints | hi | higher bound patch coordinates, exclusive | input |

| ndarray | buffer | an ndarray of the appropriate type, large enough to hold lo,hi | input |

| ndarray | ret | local buffer array where the data goes to | input/output |

| ga_nbhdl_t | nbhandle | non-blocking handle | output |

One-sided (non-collective).

A non-blocking version of the blocking get operation. The get operation can be completed locally by making a call to the wait (e.g., NGA_NbWait) routine.

Copies data from global array section to the local array buffer.

The local array is assumed to have the same number of dimensions as the global array. Any detected inconsistencies or errors in the input arguments are fatal.

Returns: The local array buffer.

ret = ga.nblock(int g_a)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| 1D ndarray of ints | ret | number of partitions for each dimension | output |

Local operation.

Given a distribution of an array represented by the handle g_a, returns the number of partitions of each array dimension.

nbhandle = ga.nbput(int g_a, buffer, lo=None, hi=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| array-like | buffer | the data to put | input |

| 1D array-like of ints | lo | lower bound patch coordinates, inclusive | input |

| 1D array-like of ints | hi | higher bound patch coordinates, exclusive | input |

| ga_nbhdl_t | nbhandle | non-blocking handle | output |

One-sided (non-collective).

A non-blocking version of the blocking put operation. The put operation can be completed locally by making a call to the wait (e.g., NGA_NbWait) routine.

Copies data from local array buffer to the global array section.

The local array is assumed to have the same number of dimensions as the global array. Any detected inconsistencies/errors in the input arguments are fatal.

One-sided (non-collective).

This function tests a non-blocking one-sided operation for completion. If it returns true, the operation has completed locally and the buffer is available for use or reuse, depending on the operation. Once the test has returned true, there is no need to call nbwait on the handle. The test function is properly implemented only on the Progress Ranks and RMA runtimes. On other runtimes, it defaults to the non-blocking wait function and always returns true.

ga.nbwait(ga_nbhdl_t nbhandle)

One-sided (non-collective).

This function completes a non-blocking one-sided operation locally. Waiting on a non-blocking put or accumulate operation ensures that the data has been injected into the network and that the user buffer can be reused. Completing a get operation ensures that the data has arrived in user memory and is ready for use. The wait operation ensures only local completion. Not all runtimes support true non-blocking capability. For those that don't, all operations are blocking and the wait function is a no-op.

Unlike their blocking counterparts, the non-blocking operations are not ordered with respect to the destination. One reason is performance; the other is that enforcing ordering would incur additional and possibly unnecessary overhead for applications that do not require it. Where ordering is necessary, call a fence operation, which is provided so that the user can confirm remote completion if needed.
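The issue, overlap, test, and wait pattern described above can be illustrated without GA. The sketch below uses `concurrent.futures` from the Python standard library as a stand-in: the `Future` plays the role of the non-blocking handle, `done()` is analogous to a test call, and `result()` is analogous to a wait call. The function `slow_get` is hypothetical and only simulates a delayed transfer; none of this reflects how the GA runtime is implemented.

```python
from concurrent.futures import ThreadPoolExecutor
import time

def slow_get(source, lo, hi):
    """Stand-in for a remote transfer: copy source[lo:hi] after a delay."""
    time.sleep(0.05)
    return source[lo:hi]

remote = list(range(10))

with ThreadPoolExecutor(max_workers=1) as pool:
    # "Issue" the transfer; the Future plays the role of nbhandle.
    handle = pool.submit(slow_get, remote, 2, 6)

    # Overlap: do unrelated local work while the transfer is in flight.
    local_sum = sum(i * i for i in range(1000))

    # Analogue of a test call: a non-blocking query that may be True or False.
    finished_early = handle.done()

    # Analogue of a wait call: block until the data is locally available.
    data = handle.result()
```

The point of the pattern is that the local work between issuing and waiting hides the transfer latency; the buffer must not be read (for a get) or overwritten (for a put or accumulate) until the wait or a successful test.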

ret = ga.ndim(int g_a)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

Local operation.

Returns the number of dimensions in the array represented by the handle g_a.

ret = ga.nnodes()

Local operation.

Returns the number of GA compute (user) processes.

ret = ga.nodeid()

Local operation.

Returns the GA process id (0, ..., ga.nnodes()-1) of the requesting compute process.

ret = ga.norm_infinity(int g_a)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| double | ret | matrix/vector infinity-norm value | output |

Collective on the processor group inferred from the arguments.

Computes the infinity-norm of the matrix or vector g_a.

ret = ga.norm1(int g_a)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | array handle | input |

| double | ret | matrix/vector 1-norm value | output |

Collective on the processor group inferred from the arguments.

Computes the 1-norm of the matrix or vector g_a.
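For reference, the two norms can be written out for a small local matrix. This sketch assumes the standard definitions (infinity-norm as the maximum absolute row sum, 1-norm as the maximum absolute column sum); the helper names and the nested-list representation are illustrative and do not call into GA.

```python
def norm_infinity(matrix):
    """Maximum absolute row sum of a nested-list matrix."""
    return max(sum(abs(x) for x in row) for row in matrix)

def norm1(matrix):
    """Maximum absolute column sum of a nested-list matrix."""
    # zip(*matrix) iterates over the columns of the matrix.
    return max(sum(abs(x) for x in col) for col in zip(*matrix))

a = [[1.0, -2.0],
     [-3.0, 4.0]]

inf_norm = norm_infinity(a)  # row sums are 3 and 7
one_norm = norm1(a)          # column sums are 4 and 6
```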

Collective on the processor group inferred from the arguments.

The overlay function is designed to allow users to create a global array on top of an existing global array. This can be used in situations where it is desirable to create and destroy a large number of global arrays in rapid succession and where the size of these global arrays can be bounded beforehand. A large global array is created at the start using conventional create or allocate calls and is then used as the parent for new global arrays that are allocated using the overlay call. This approach removes some collectives and memory allocation and deallocation calls from the process of creating a global array and should result in improved performance. Note that the parent global array should not be used while another array is overlaid on top of it.

Collective on the processor group inferred from the arguments.

ga.pack(int g_src, int g_dst, int g_msk, lo=None, hi=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_src | handle for source array | input |

| int | g_dst | handle for destination array | input |

| int | g_msk | handle for integer array representing mask | input |

| 1D array-like of ints | lo | low value of range on which operation is performed | input |

| 1D array-like of ints | hi | hi value of range on which operation is performed | input |

Collective on the processor group inferred from the arguments.

The pack subroutine is designed to compress the values in the source vector g_src into a smaller destination array g_dst based on the values in an integer mask array g_msk. The values lo and hi denote the range of elements that should be compressed, and icount is a variable that on output lists the number of values placed in the compressed array. This operation is the complement of the GA_Unpack operation. An example using the C interface is shown below:

GA_Pack(g_src, g_dst, g_msk, 1, n, &icount);

g_msk: 1 0 0 0 0 0 1 0 1 0 0 1 0 0 1 1 0

g_src: 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17

g_dst: 1 7 9 12 15 16

icount: 6

The current implementation requires that the distribution of the g_msk array matches the distribution of the g_src array.
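The selection rule in the example above can be reproduced with plain Python lists. The function below is a hypothetical local emulation (it is not the library routine and ignores data distribution); it uses 0-based indices with `lo` inclusive and `hi` exclusive, which is an assumption chosen to match the Python parameter tables.

```python
def pack(src, mask, lo, hi):
    """Return (dst, icount): the values of src[lo:hi] whose mask entry is nonzero."""
    dst = [value for value, keep in zip(src[lo:hi], mask[lo:hi]) if keep]
    return dst, len(dst)

mask = [1, 0, 0, 0, 0, 0, 1, 0, 1, 0, 0, 1, 0, 0, 1, 1, 0]
src = list(range(1, 18))  # 1, 2, ..., 17

dst, icount = pack(src, mask, 0, len(src))
```

With these inputs `dst` holds the six selected values and `icount` is 6, matching the listing above.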

Collective on the processor group inferred from the arguments.

This subroutine enumerates the values of an array between elements lo and hi starting with the value start and incrementing each subsequent value by inc. This operation is only applicable to 1-dimensional arrays. An example of its use is shown below:

GA_Patch_enum(g_a, 1, n, 7, 2);

g_a: 7 9 11 13 15 17 19 21 23 ...
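The enumeration rule amounts to an arithmetic progression written into a range of a 1-dimensional array. A minimal local sketch follows, assuming 0-based indices with `lo` inclusive and `hi` exclusive; the function is an illustration on a Python list, not the GA routine.

```python
def patch_enum(array, lo, hi, start, inc):
    """Fill array[lo:hi] with start, start + inc, start + 2*inc, ..."""
    for offset, index in enumerate(range(lo, hi)):
        array[index] = start + offset * inc
    return array

g_a = [0] * 9
patch_enum(g_a, 0, 9, 7, 2)
```

Elements outside the range `lo` to `hi` keep their previous values.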

ga.periodic_acc(int g_a, buffer, lo=None, hi=None, alpha=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| array-like | buffer | the data to put | input |

| 1D array-like of ints | lo | lower bound patch coordinates, inclusive | input |

| 1D array-like of ints | hi | higher bound patch coordinates, exclusive | input |

| object | alpha | scale factor, cast to the appropriate type | input |

One-sided (non-collective).

Same as nga_acc except the indices can extend beyond the array boundary/dimensions in which case the library wraps them around. For Python, this is the periodic version of ga.acc.

Combines data from buffer with data in the global array patch.

The buffer array is assumed to have the same number of dimensions as the global array. If the buffer is not contiguous, a contiguous copy will be made.

global array section (lo[],hi[]) += alpha * buffer
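The wrap-around behaviour can be pictured as taking each index modulo the array extent. The sketch below assumes exactly that interpretation for a 1-dimensional list; the helper is hypothetical and does not call the library.

```python
def periodic_acc(array, buffer, lo, hi, alpha=1):
    """Accumulate alpha * buffer into array[lo:hi], wrapping indices modulo len(array)."""
    n = len(array)
    for offset, index in enumerate(range(lo, hi)):
        # Indices past the end (or before the start) wrap around.
        array[index % n] += alpha * buffer[offset]
    return array

g = [0] * 5
# The patch covers indices 4, 5, 6, which wrap to 4, 0, 1.
periodic_acc(g, [1, 2, 3], lo=4, hi=7, alpha=2)
```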

ret = ga.periodic_get(int g_a, lo, hi, buffer, alpha=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| 1D array-like of ints | lo | lower bound patch coordinates, inclusive | input |

| 1D array-like of ints | hi | higher bound patch coordinates, exclusive | input |

| ndarray | buffer | an ndarray of the appropriate type, large enough to hold lo,hi | input |

| ndarray | ret | local buffer array where the data goes to | output |

One-sided (non-collective).

Same as nga_get except the indices can extend beyond the array boundary/dimensions in which case the library wraps them around.

The local array is assumed to have the same number of dimensions as the global array. Any detected inconsistencies/errors in the input arguments are fatal.

Returns: The local array buffer.
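A periodic get on a 2-dimensional array can be sketched the same way, wrapping each dimension independently. This is an assumption-based illustration on nested lists (the helper name and the modulo interpretation of wrapping are not taken from the library source).

```python
def periodic_get(array, lo, hi):
    """Return the patch [lo, hi) of a nested-list 2-D array with periodic wrapping."""
    nrows, ncols = len(array), len(array[0])
    return [[array[i % nrows][j % ncols] for j in range(lo[1], hi[1])]
            for i in range(lo[0], hi[0])]

# A 3x4 grid whose entry at (r, c) is 10*r + c.
grid = [[10 * r + c for c in range(4)] for r in range(3)]

# Rows 2..3 wrap to rows 2 and 0; columns 3..4 wrap to columns 3 and 0.
patch = periodic_get(grid, lo=(2, 3), hi=(4, 5))
```

The returned patch has the shape implied by `hi - lo` in each dimension, regardless of how many times the indices wrap.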

ga.periodic_put(int g_a, buffer, lo=None, hi=None)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | g_a | the array handle | input |

| array-like | buffer | the data to put | input |

| 1D array-like of ints | lo | lower bound patch coordinates, inclusive | input |

| 1D array-like of ints | hi | higher bound patch coordinates, exclusive | input |

One-sided (non-collective).

Same as nga_put except the indices can extend beyond the array boundary/dimensions, in which case the library wraps them around. Copies data from the local array buffer to the global array section. The local array is assumed to have the same number of dimensions as the global array. Any detected inconsistencies/errors in the input arguments are fatal.

ret = ga.pgroup_brdcst(int pgroup, buffer, int root=0)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | pgroup | processor group handle | input |

| 1D array-like of objects | buffer | the ndarray message (converted to the appropriate type) | input |

| int | root | the process which is sending | input |

Collective on the processor group inferred from the arguments.

Broadcasts data from the processor specified by root to all other processors in the processor group specified by pgroup. The initial and broadcasted data are held in buffer. (In the C API, the length of the message in bytes is specified by lenbuf and the data are found in the buffer specified by the pointer buf.)

If the buffer is not contiguous, an error is raised. This operation is provided only for convenience purposes: it is available regardless of the message-passing library that GA is running with.

Returns: The buffer in case a temporary was passed in.

ret = ga.pgroup_create(list)

| Type | Name | Description | Intent |

|---|---|---|---|

| 1D array-like of ints | list | list of processor IDs in group | input |

| int | pgroup | pgroup handle | output |

Collective on the default processor group.

This command is used to create a processor group. At present, it must be invoked by all processors in the current default processor group. The list of processors uses the indexing scheme of the default processor group. If the default processor group is the world group, then these indices are the usual processor indices. This function returns a process group handle that can be used to reference this group by other functions.
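One common way to build the list argument is to split the default group into contiguous blocks of ranks, with every process computing the list for the block it belongs to. The helper below is a hypothetical sketch of that bookkeeping only; `nnodes`, `group_size`, and `me` are assumed inputs (for example, values obtained from ga.nnodes() and ga.nodeid()), and the group itself would still have to be created by the library call.

```python
def group_members(nnodes, group_size, me):
    """IDs (default-group indexing) of the contiguous block of ranks containing `me`."""
    first = (me // group_size) * group_size
    # The last block may be shorter when nnodes is not a multiple of group_size.
    return list(range(first, min(first + group_size, nnodes)))

# With 8 processes in blocks of 4, rank 5 belongs to the second block.
members = group_members(nnodes=8, group_size=4, me=5)
```

Because the indices are relative to the default processor group, the same helper would apply unchanged when the default group is a subgroup rather than the world group.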

ret = ga.pgroup_destroy(int pgroup)

| Type | Name | Description | Intent |

|---|---|---|---|

| int | pgroup | processor group handle | input |

| bool | ret | False if processor group was not previously active | output |

Collective on the processor group inferred from the arguments.

This command is used to free up a processor group handle. It returns 0 if the processor group handle was not previously active.

Return a copy of an existing processor group.

Collective on the processor group inferred from the arguments.

ret = ga.pgroup_get_default()

Local operation.

This function will return a handle to the default processor group, which can then be used to create a global array using one of the NGA_create_*_config or GA_Set_pgroup calls.

ret = ga.pgroup_get_mirror()

Local operation.