IPPD: Integrated End-to-End Performance Prediction and Diagnosis for Extreme Scientific Workflows

It is increasingly difficult to design, analyze, and implement large-scale workflows for scientific computing, especially in situations where time-critical decisions must be made. Workflows are designed to execute on a loosely connected set of distributed and heterogeneous computational resources. Each computational resource may have vastly different capabilities, ranging from sensors to high-performance clusters. Frequently, workflows are composite applications built from loosely connected parts. Each task of a workflow may be designed for a different programming model and implemented in a different language. Most workflow tasks communicate via files sent over general-purpose networks. As a result of this complex software and execution space, large-scale scientific workflows exhibit extreme performance variability. It is therefore critically important to understand the factors that influence their performance and to identify opportunities for optimizing their execution.

The performance of a workflow is determined by a wide range of factors. Some are specific to a particular workflow component and include both software factors (application, data sizes, etc.) and hardware factors (compute nodes, I/O, network). Others stem from the combination and orchestration of the different tasks in the workflow, including the workflow engine, the mapping of the workflow onto the distributed resources, the coordination of tasks and data organization across programming models, and workflow component interaction.

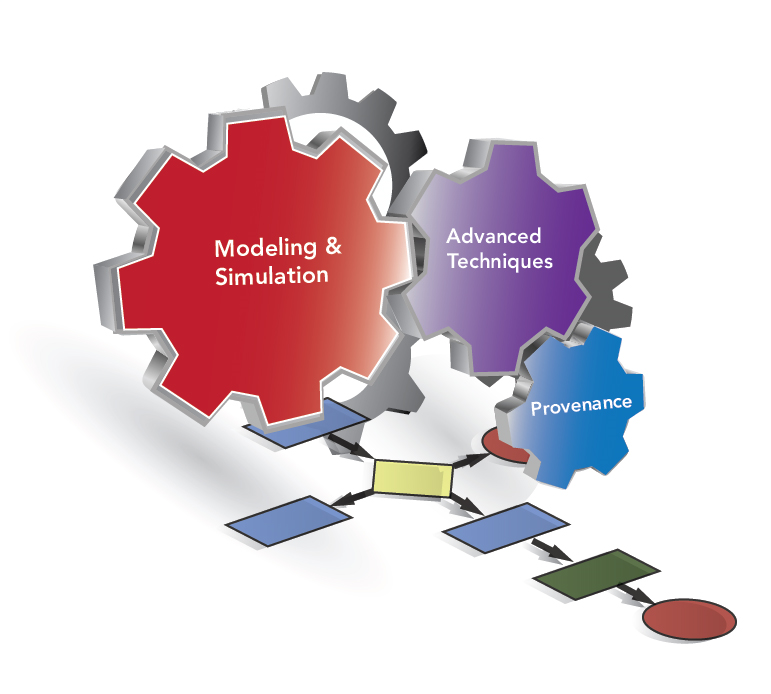

IPPD addresses three core issues in order to provide insights into workflow execution that can be used both to explain and to optimize it:

- provide an expectation of the performance of a workflow in advance of execution, to establish a best-case performance baseline;

- identify areas of consistently low performance and diagnose their causes; and

- study the important issue of performance variability.
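
As a rough illustration of how these three issues relate, the sketch below compares measured task runtimes against a predicted baseline, flags tasks that are slow in every run, and reports run-to-run variability as a coefficient of variation. It is a minimal sketch and not IPPD's actual method: the function name `diagnose`, the task names, the runtimes, and the 1.5x slowdown threshold are all hypothetical, chosen only for illustration.

```python
from statistics import mean, stdev


def diagnose(predicted, observed, slowdown_threshold=1.5):
    """Compare observed task runtimes against predicted baselines.

    predicted: dict mapping task name -> predicted runtime in seconds
    observed:  dict mapping task name -> list of measured runtimes in seconds
    slowdown_threshold: hypothetical cutoff; a task counts as consistently
        slow only if every run exceeds threshold * baseline
    """
    report = {}
    for task, runs in observed.items():
        baseline = predicted[task]
        avg = mean(runs)
        # Variability: coefficient of variation (sample std dev / mean).
        cv = stdev(runs) / avg if len(runs) > 1 else 0.0
        report[task] = {
            "slowdown": avg / baseline,
            "consistently_slow": all(
                r > slowdown_threshold * baseline for r in runs
            ),
            "cv": cv,
        }
    return report


# Hypothetical data: two tasks, three repeated runs each.
predicted = {"simulate": 100.0, "transfer": 20.0}
observed = {
    "simulate": [105.0, 98.0, 110.0],
    "transfer": [45.0, 50.0, 40.0],
}

for task, stats in diagnose(predicted, observed).items():
    print(task, stats)
```

With this made-up data, `transfer` is flagged as consistently slow because every run exceeds 1.5 times its predicted baseline, while `simulate` stays close to its prediction. A real diagnosis would also need to attribute the slowdown to a cause such as I/O, network, or scheduling, which this sketch does not attempt.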